Let’s face it, while data science was named the “sexiest job of the 21st century,” the majority of people still shudder at even the mention of statistics. The root of why this discipline has been so alienating throughout the course of its history can be found with its close relationship with mathematics.

Whether you believe you can’t learn statistical analysis or are simply curious to learn more about it, this guide will help get you started by laying out the core introductory concepts.

At the heart of statistics are the five essential concepts of statistics, and form the basis for data analysis. The first four can be dealt with without going into much detail about their equations:

- Mean: the average value, calculated as the sum of all observations over the number of observations

- Median: the midpoint of the dataset, calculated by ordering all observations from least to greatest and taking the value directly in the middle

- Variance: the general spread of the data, calculated as the average of squared differences of the mean

- Standard Deviation: also a measure of spread, calculated by taking the square root of the variance

Much like witnesses in a detective novel, these four concepts start to tell you the story of a particular set of data because they are descriptive statistics. For example, if you look around at the people in any restaurant you find yourself in, it can be very difficult to build a narrative, or interpretation, about the kind of crowd you’re surrounded by based solely on appearance.

Say, however, you are given information about their age, monthly income, level of education, gender, and taste of music. The first two concepts, the mean and the median, are both measures of central tendency that can tell you whether your crowd is mostly twenty-somethings making their way through college or wealthy, elderly people that invest in hedge funds.

The difference between when you use these concepts depends on the distribution of the variable that you’re measuring or, in this example, the amount of variability within the crowd. The more alike the crowd is, the more accurate taking the mean will be in telling your story; the more variation between the people are, the more accurate the picture you draw will be by taking the mean.

The variance and standard deviation are both measures of variability and can tell you how different each observation in your data are from the average with regards to a specific variable.

If you wanted to see how similar the crowd is in terms of age, you would start the computation by calculating the mean age and, by subtracting every individual’s age from it, find a number that tells you how far people are spread from the average. The standard deviation, on the other hand, gives you how far or close your data is clustered around the mean based on a normal distribution.

The standard deviation is exactly like the variance in terms of what it says about the spread of your data – in fact, the standard deviation is calculated by taking the square root of the variance. The difference lies in the fact that the standard deviation the descriptive measure that is easiest to report because it is in the same units as the original data, whereas the variance is not.

You can test what you've learned in your online statistics course so far by attempting some statistics practice problems online!

What is Probability?

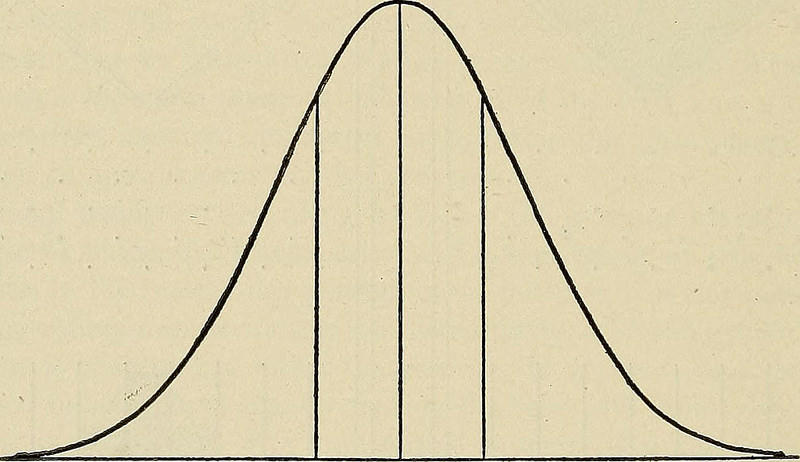

Now that you’ve mastered the four basic concepts, it’s time to discuss the fifth and most important building block of statistics: probability theory. This is normally where people go running for the hills when, in reality, probability theory is only used for the purpose of understanding the most important graph you will ever see at the beginning of your statistics journey:

This graph represents a normal probability distribution, or normal distribution, where the data is arranged symmetrically around the mean. In other words, probability is used to understand the central limit theorem or CLT.

The CLT is defined as the idea that when an infinite amount of successive random samples are drawn from the population, the sampling distribution of those means will approach a normal distribution.

In other words, regardless of what the population distribution looks like, the mean and standard deviation will become normal with the more samples that are drawn, looking like the graph above. Understanding probability not only gives us the language to talk about sample distribution but is the very tool that lets us calculate it.

Get a vice data science course Toronto here.

How to Choose a Statistical Test

Once you’ve familiarized yourself with all of the basics, and understand the fundamental concepts of statistics, it can be difficult to tackle the next step – which is, deciding what test to run with your specific set of data. While there is a wide array of statistical tests and approaches available, they can be boiled down into four distinct categories of tests for:

- Association

- Comparison

- Prediction

- Data that doesn’t follow a normal distribution, or nonparametric

In order to decide which tests to perform, it is first important to distinguish between the types of data you have based on the variables you are analyzing. Variables can be either scale or categorical variables.

Scale variables are quantitative and fall under two categories;

- Continuous: can take on any value, like height

- Discrete: are integers, like the number of children

Categorical variables are qualitative and also fall under two distinct categories:

- Ordinal: has an obvious order, like a scale rating happiness from 1 to 10

- Nominal: has no meaningful order, like gender

When to Use Tests of Association

These types of tests are meant for looking at the relationship between two variables. It is the closest you'll get to looking at causality between two variables. For example, you want to discover if there is an association between marital status and level of education. All of these test the strength of the association between two variables:

| Type of Test | Type of Variables | Example |

|---|---|---|

| Pearson Correlation | Two continuous variables | If shoe size has an association with height |

| Spearman Correlation | Two ordinal variables | How strong of an association there is between happiness and economic status |

| Chi-Square | Two categorical variables | To see whether gender and favorite color have any association |

Tests of Comparison between Means

Tests of comparison deal with looking at the differences between different variables by looking at the difference between their means. For example, you want to see if where one goes to school makes a difference on standardized test scores.

| Type of Test | Type of Variables | Example |

|---|---|---|

| Paired T-Test | Two related variables | The difference between weight before and after taking new supplement |

| Independent T-Test | Two independent variables | The difference in spending on gas between people Los Angeles and New York |

| One-Way Analysis of Variance (ANOVA) | One independent variable with distinct levels and one continuous variable | Comparing the means of test scores from three different levels of education |

| Two-Way ANOVA | Two or more independent variables with distinct levels and one continuous variable | Comparing the means of test scores from both three levels of education and twelve different zodiac signs |

Tests of Prediction using Linear Regression

Prediction tests are used to determine whether a change in one or more variables the change in another. For example, given data on gender, diet and income you can investigate whether a change in these leads to a change in height.

| Type of Test | Type of Variable | Example |

|---|---|---|

| Simple Linear Regression | One scale variable (dependent) with one or two scale variables (predictors) | You want to see if and how well age and height predict weight |

| Multiple Linear Regression | One scale variable (dependent) with two or more scale variables (predictors) | You want to see if and how well age, height, and income predict weight |

Tests for Nonparametric Data

These tests should be performed when the data does not meet the assumptions for the other tests. For example, when the data does not follow a normal distribution and is highly skewed.

| Type of Test | Type of Variable | Example |

|---|---|---|

| Wilcoxon Rank-Sum Test | Two independent variables | Between two different drugs, which one offers the best relief on two random, distinct groups of a population |

| Wilcoxon Sign-Rank Test | Two related variables | Between two different drugs, which one offers the best relief on the same group of patients |

| Friedman Test | Three metric or ordinal variables (has to be either metric or ordinal) | Three different ad ratings given by individuals in the same population |

How to Perform Statistical Tests

There are several assumptions about the data you are using that are tied to each statistical test discussed. In order for the tests to run, be predictive and accurate, these assumptions must be held. Because the assumptions for different types of tests can be different, it is imperative to check them before you start to model your data.

The most common programs used for statistical analysis are:

- Excel

- Stata

- SAS

- SPSS

- Python

- R

If you are running tests for parametric data, there are four main assumption checks that your data will have to pass. However, it should be noted that each test has it's own different set of assumptions that should be checked beforehand, and that this list is simply the ones you will come across most often.

| Assumption | Description |

|---|---|

| Independence | The groups that make up the sample are independent of eachother. |

| Normality | The data in the set is are normal, meaning that there it follows a normal distribution. |

| Homogeneity of variance | If there are multiple groups in the data relating to your independent variable, they have the same variance. |

If you're looking for some extra help on these introductory subjects, there are many online resources you can use to build your skills. Tutoring websites like Superprof, or online webinar courses from R-bloggers can help you get started on crunching some numbers.

Check out this data science course here.

Summarize with AI: